Lands your AI agent at the right code in fewer turns, tokens, & breakages.

A local intelligence layer that sits between your AI agent and your codebase — indexes every call, remembers every decision, and gets sharper the longer you use it.

One global install, then unerr install <agent> per repo. No account. No keys.

The problem

The agent isn't stupid. It's flying blind.

Watch any AI coding session for ten minutes and you'll see the same loop.

Reads 30 files to find one function

Burns the context window before it writes a line.

Edits something with 40 callers

Never knows it just broke three services.

Re-derives conventions you taught yesterday

And this morning. And an hour ago.

Forgets the entire session

The moment the context window closes.

Every one is the same root cause: no persistent memory of your code, your team's style, or its own past mistakes.

unerr is that memory.

What unerr changes

One process, fully local, indexed in seconds.

Six mechanisms run in the background the moment your agent connects via MCP. No agent re-training, no prompt tweaks, no signups.

Graph-guided navigation

get_entity · get_references · get_imports · search_code in <5ms. The agent stops reading 30 files to find one function.

Reduces wasted file reads · ~70% fewer turns to land

Targeted file reads

file_read({ entity: "fnName" }) returns just that function plus relevant conventions and facts — never the whole 2,000-line file.

Lower context cost per turn
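As a rough sketch of what a targeted read could look like on the wire: MCP clients invoke tools with a JSON-RPC 2.0 `tools/call` request. The tool name file_read and the entity argument come from the text above; the request id and the helper name build_tool_call are illustrative, and the shape of unerr's response is left out because it isn't documented here.

```python
import json


def build_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,  # arbitrary id chosen by the client
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# Ask for a single function instead of the whole file.
request = build_tool_call(1, "file_read", {"entity": "fnName"})
print(request)
```

In practice the agent's MCP client builds this message for you; the point is only that the request names one entity rather than a file path.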

Shell output compression

11 strategies, 645+ command classifiers. Diffs, errors, logs, test runs, YAML — each compressed differently.

93% avg compression · 2 MB → 138 KB

Persistent memory

record_fact + recall_facts with decay-adjusted confidence. Conventions, decisions, and anti-patterns survive across sessions.

Cross-session memory · no starting from zero
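The page doesn't say how confidence decays. One plausible reading, sketched here purely as an assumption, is exponential decay with a half-life: a fact's confidence halves every fixed number of days unless it is recorded again. The function name, the 30-day half-life, and the sample facts are all hypothetical.

```python
def decayed_confidence(initial: float, age_days: float, half_life_days: float = 30.0) -> float:
    """Exponential decay: confidence halves every half_life_days (assumed model)."""
    if age_days < 0:
        raise ValueError("age_days must be non-negative")
    return initial * 0.5 ** (age_days / half_life_days)


# (fact text, confidence when recorded, age in days) -- made-up examples
facts = [
    ("prefer named exports", 0.8, 60.0),
    ("use pnpm, not npm", 0.9, 2.0),
]

# Recall the freshest, most trusted facts first.
ranked = sorted(facts, key=lambda f: decayed_confidence(f[1], f[2]), reverse=True)
for text, initial, age in ranked:
    print(f"{decayed_confidence(initial, age):.2f}  {text}")
```

Under this model a recent convention outranks an older one with similar initial confidence, which matches the idea that stale guidance should fade rather than vanish.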

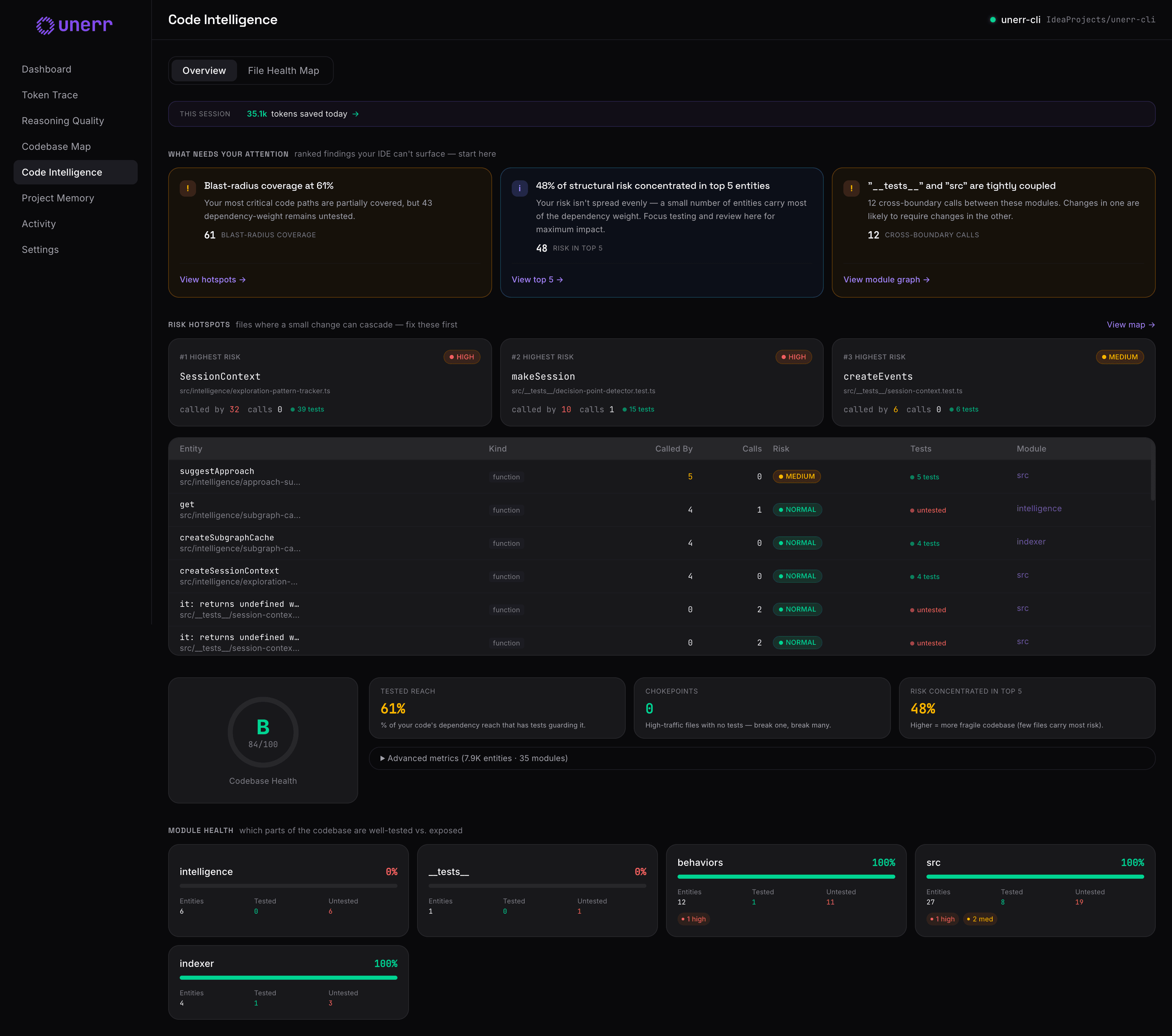

Blast radius before edits

get_references surfaces every caller before a change. No more confident wrong edits that ripple across services.

Fewer breakages · safer refactors
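Conceptually, a blast-radius check is a walk over the reverse call graph. The sketch below is not unerr's implementation; it assumes a simple in-memory mapping from each function to its direct callers (all function names are made up) and collects every transitive caller with a breadth-first search.

```python
from collections import deque


def blast_radius(callers: dict[str, list[str]], target: str) -> set[str]:
    """Return every function that directly or transitively calls target."""
    seen: set[str] = set()
    queue = deque([target])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, []):
            # Skip callers already visited, and the target itself on cycles.
            if caller not in seen and caller != target:
                seen.add(caller)
                queue.append(caller)
    return seen


# Hypothetical reverse call graph: function -> functions that call it.
callers = {
    "parse_price": ["build_invoice", "render_cart"],
    "build_invoice": ["checkout_handler"],
    "render_cart": ["cart_page"],
}

print(sorted(blast_radius(callers, "parse_price")))
```

An agent that sees four affected functions before editing parse_price can check each of them, instead of discovering the breakage afterwards.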

Local-first

Two processes, one local DB. Zero network calls. No API keys. No cloud. Your code never leaves the machine.

0 network calls · ELv2 license

The proof

Every claim is a tool call your agent just made.

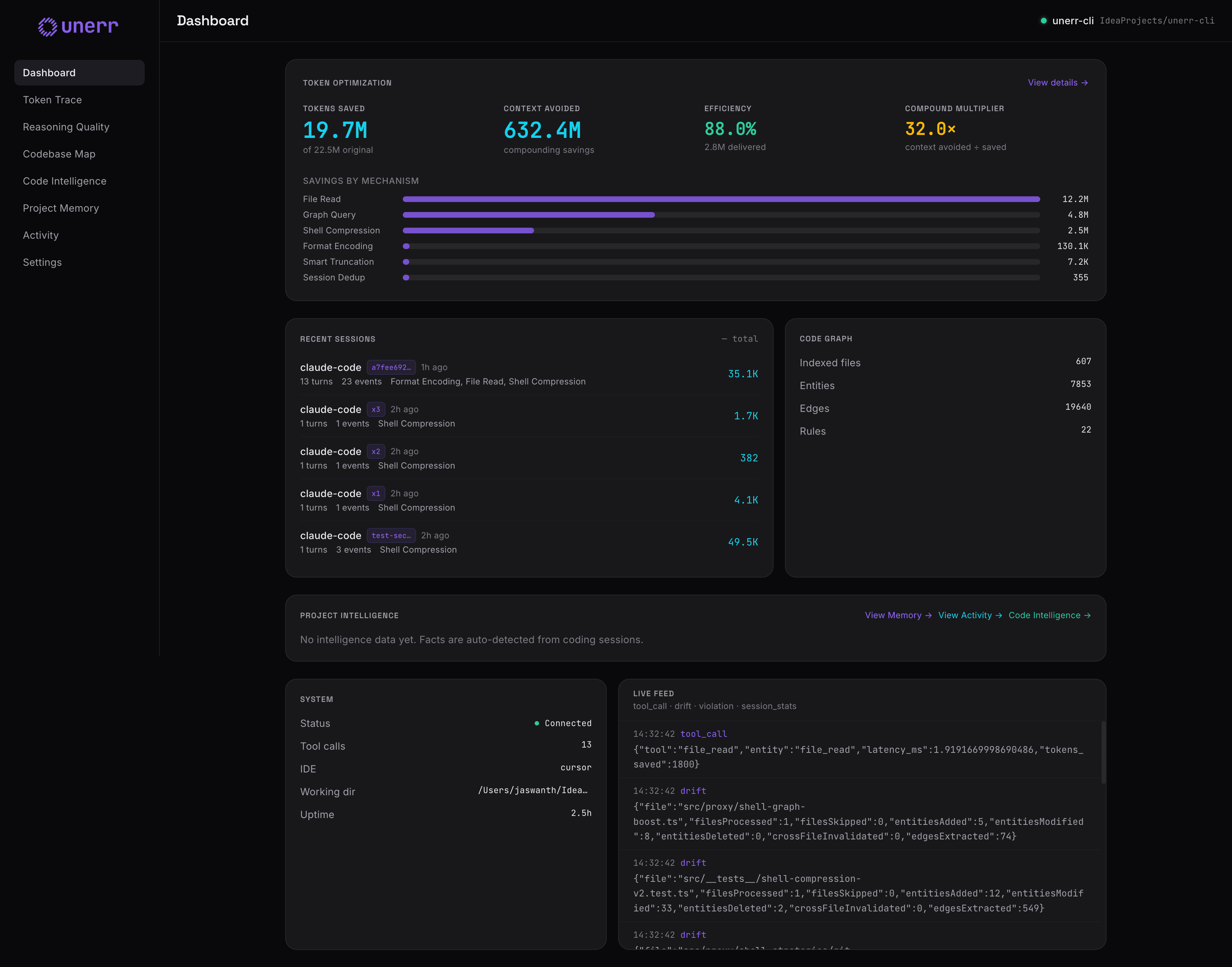

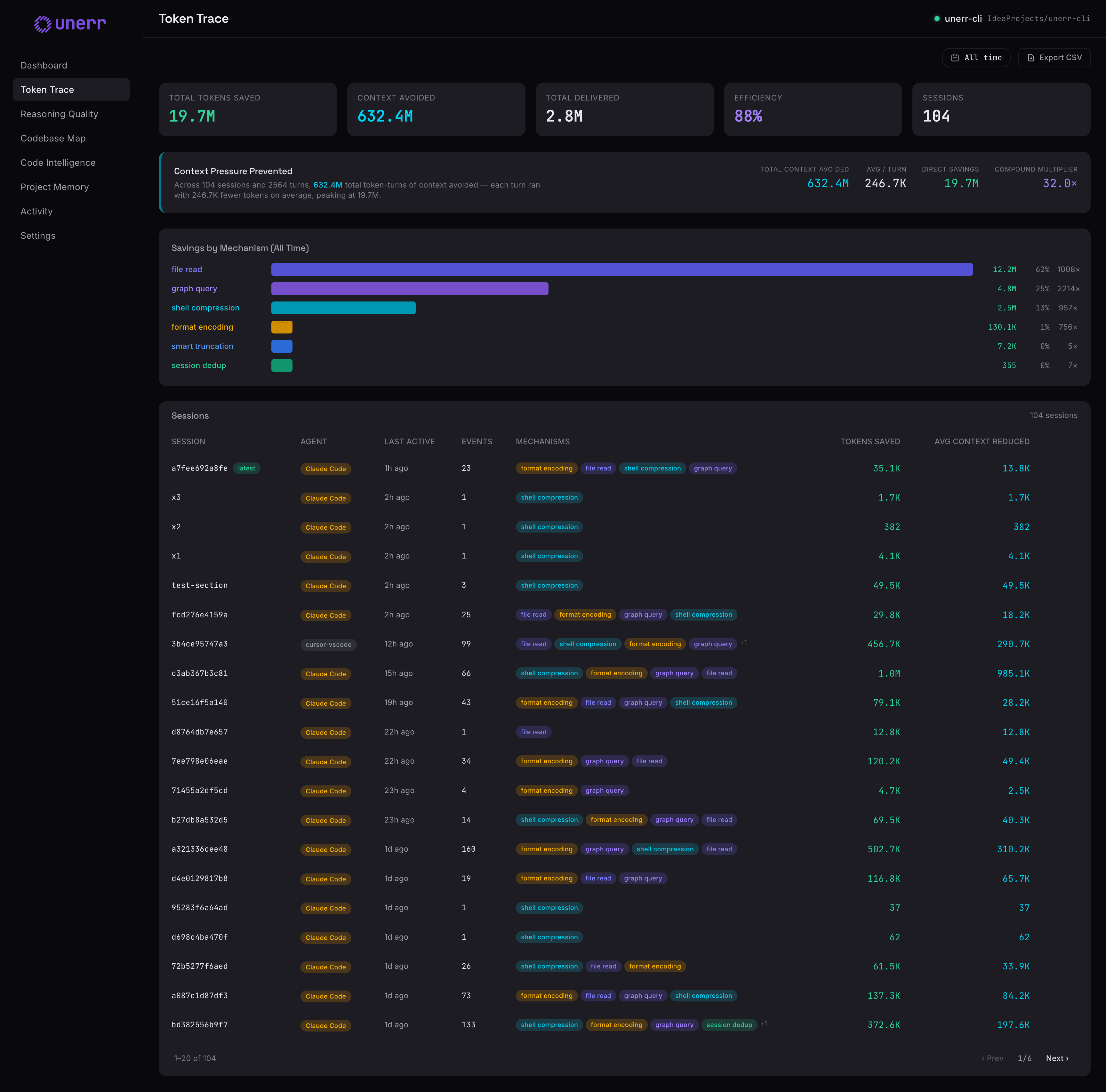

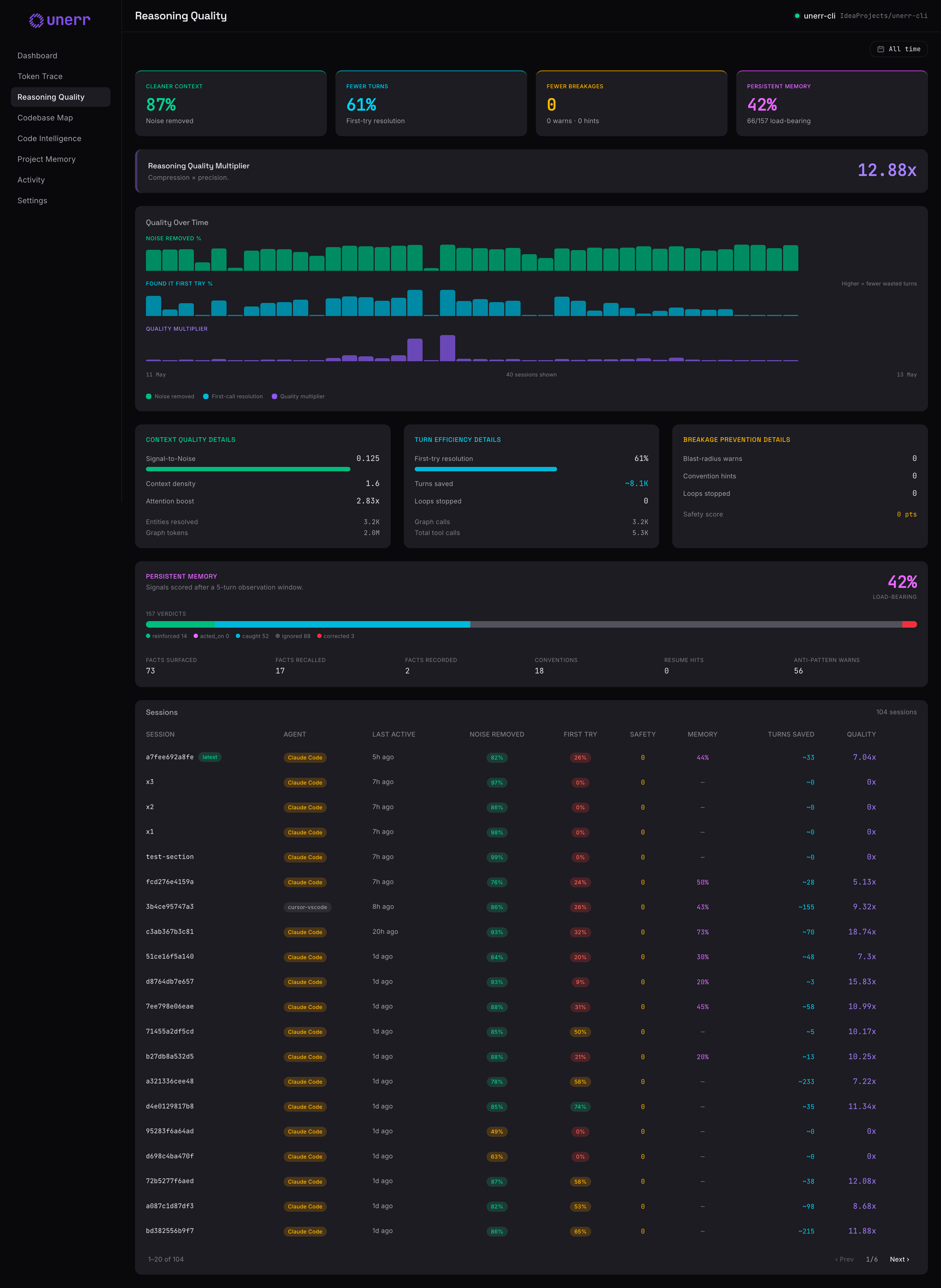

Open the dashboard and watch unerr's effect on the current session in real time. Four live panes, all backed by an append-only ledger.

Shell compression

11 strategies. 645+ command classifiers.

| Strategy | Targets | Compression |
|---|---|---|
| diff | git diff, patch output | 99% |
| structured | JSON APIs, docker inspect | 97% |
| progress | npm/pip install | 95% |
| log_text | build logs, cargo build | 89% |
| test_results | vitest, pytest, playwright | 80% |
| tabular | ps aux, docker ps, kubectl get | 77% |
| error_diagnostic | tsc, eslint, rustc | 72% |
| tree_paths | find, tree, ls -R | 42% |
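To make the table concrete, here is a toy sketch of how a command might be routed to a strategy and how a compression percentage is computed. The routing rules are a hypothetical handful of prefix matches taken from the Targets column, not unerr's actual 645+ classifiers, and the function names are illustrative.

```python
from typing import Optional

# (command prefix, strategy name) -- a tiny, assumed subset for illustration
STRATEGY_BY_PREFIX = [
    ("git diff", "diff"),
    ("docker inspect", "structured"),
    ("npm install", "progress"),
    ("pip install", "progress"),
    ("cargo build", "log_text"),
    ("pytest", "test_results"),
    ("vitest", "test_results"),
    ("docker ps", "tabular"),
    ("kubectl get", "tabular"),
    ("tsc", "error_diagnostic"),
    ("eslint", "error_diagnostic"),
    ("tree", "tree_paths"),
]


def classify(command: str) -> Optional[str]:
    """Pick a strategy by whole-word prefix match, or None if nothing matches."""
    cmd = command.strip()
    for prefix, strategy in STRATEGY_BY_PREFIX:
        if cmd == prefix or cmd.startswith(prefix + " "):
            return strategy
    return None


def compression_pct(original_bytes: int, compressed_bytes: int) -> float:
    """Percentage of the original output that was removed."""
    return 100.0 * (1 - compressed_bytes / original_bytes)


print(classify("git diff HEAD~1"))
print(round(compression_pct(2 * 1024 * 1024, 138 * 1024)))
```

The second call reproduces the 2 MB → 138 KB figure quoted earlier on this page: 138 KB is about 6.7% of 2 MB, which rounds to 93% compression.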

Quick start

From zero to a smarter agent in under a minute.

Four explicit steps. Per-repository. No accounts, no API keys, no external dependencies.

1. Install the CLI globally

   Single global install. Verify with unerr --version.

2. cd into your repo root

   Everything unerr writes is scoped to the current directory: .mcp.json, .claude/, and .unerr/. Run install from the repo root.

3. Install for your coding agent

   Writes MCP config, drops bundled skills, injects a tool-preference section, and installs hooks where supported.

   → writes .mcp.json · CLAUDE.md · .claude/skills/ · hooks

4. Run unerr and keep it running

   The daemon owns the graph, file watcher, drift detection, and behavior automation. ~80–200 MB, idles at near-zero CPU. Leave it running.

Need a different agent? Run unerr install --show-instructions <agent> — 16 agents supported (6 fully integrated, 10 in progress).

MCP surface

19 graph-aware tools. One MCP server.

Every tool returns sub-5ms responses with inline ur| signals for drift, blast-radius warnings, and circuit-breaker halts.

Graph Intelligence

8 tools

| Tool | Description |
|---|---|
| get_entity | Entity: signature, body, callers, callees, risk |
| get_file | All entities in a file with risk summary |
| get_references | Callers (blast radius) or callees (dependencies) |
| get_imports | Import graph for a file |
| search_code | Graph-ranked full-text search |
| get_conventions | Naming, structure, import patterns |
| get_critical_nodes | High fan-in/fan-out chokepoints |
| get_cross_boundary_links | Unexpected cross-module deps |

Structural Analysis

3 tools

| Tool | Description |
|---|---|
| get_project_stats | Entity counts, risk distribution, health grade |
| file_connections | Imports + co-change correlations |
| get_test_coverage | Direct + transitive tests for any entity |

File Protocol

2 tools

| Tool | Description |
|---|---|
| file_read | Context-aware read; auto-injects conventions and facts |
| file_outline | File structure without reading the body |

Persistent Memory

2 tools

| Tool | Description |
|---|---|
| record_fact | Persist a convention, decision, or anti-pattern |
| recall_facts | Hierarchical scope + decay-adjusted confidence |

Session Narrative

4 tools

| Tool | Description |
|---|---|
| mark_intent | One-sentence task start. Becomes the turn title |
| mark_decision | Records a chosen approach + alternatives |
| mark_blocker | Flags an unresolved obstacle |
| mark_resolution | Resolves a prior blocker by marker_id |

Every response includes _meta (latency, risk level, drift status). Inline ur|<tag> signals surface high-priority guidance directly in the response body.
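The exact syntax of the inline signals isn't spelled out on this page. As a minimal sketch, assume each signal sits on its own line, starts with ur|<tag>, and may be followed by free text; under that assumption a client could pull the signals out of a response body like this. The tag names and sample body below are invented for illustration.

```python
import re

# Assumed format: a line beginning with "ur|<tag>", optionally followed by text.
SIGNAL_RE = re.compile(r"^ur\|([A-Za-z0-9_-]+)[ \t]*(.*)$", re.MULTILINE)


def extract_signals(body: str) -> list[tuple[str, str]]:
    """Return (tag, message) pairs for every signal line found in body."""
    return [(m.group(1), m.group(2).strip()) for m in SIGNAL_RE.finditer(body)]


# Hypothetical response body mixing code with two signal lines.
sample = (
    "def parse_price(raw): ...\n"
    "ur|blast_radius 40 callers across 3 services\n"
    "ur|drift\n"
)

for tag, message in extract_signals(sample):
    print(tag, "->", message or "(no message)")
```

Agents generally read these signals as plain text, so explicit parsing like this would mostly matter for tooling such as dashboards or logs.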